How to Run a Technology Scouting Program: A Step-by-Step Guide for Growing Companies

You already know your company needs to stay on top of emerging technology. The question is how to actually do it when you don't have a team of analysts, a dedicated scouting budget, or forty hours a week to spend on research.

Most technology scouting guides are written for enterprise innovation teams with dedicated headcount and established processes. This one is written for the innovation manager, digital transformation lead, or strategy director at a growing company who has been handed the scouting responsibility alongside everything else they already own.

The good news is that running a real technology scouting program does not require a team. It requires a system. And in 2026, the tools available to a one-person scouting operation are more powerful than what most enterprise teams had five years ago.

This guide covers exactly how to build and run that system — from defining what you are looking for through finding vendors, evaluating them consistently, and turning the best ones into pilots that actually produce decisions.

The Definition

Technology scouting is the structured, repeatable practice of identifying, assessing, and tracking emerging technologies and vendors that are relevant to your organization's strategic priorities — before you need them, not after a competitor has already acted on them.

The word structured is the one that separates scouting from browsing. Browsing is reading TechCrunch, attending conferences, and fielding inbound vendor pitches. It produces noise. Structured scouting produces a shortlist of relevant, assessed candidates that your organization can act on with confidence.

The word repeatable is the one that separates a program from a one-time project. A one-time scouting effort answers a specific question and is done. A program builds organizational intelligence over time — so every scouting cycle makes the next one faster and more informed.

Why Growing Companies Need Scouting More Than They Think

The instinct at most growing companies is to wait until a technology problem is urgent before going looking for solutions. The resulting vendor evaluation happens under time pressure, with incomplete information, and is driven by whoever sent the most compelling inbound pitch rather than by a structured view of what is actually available in the market.

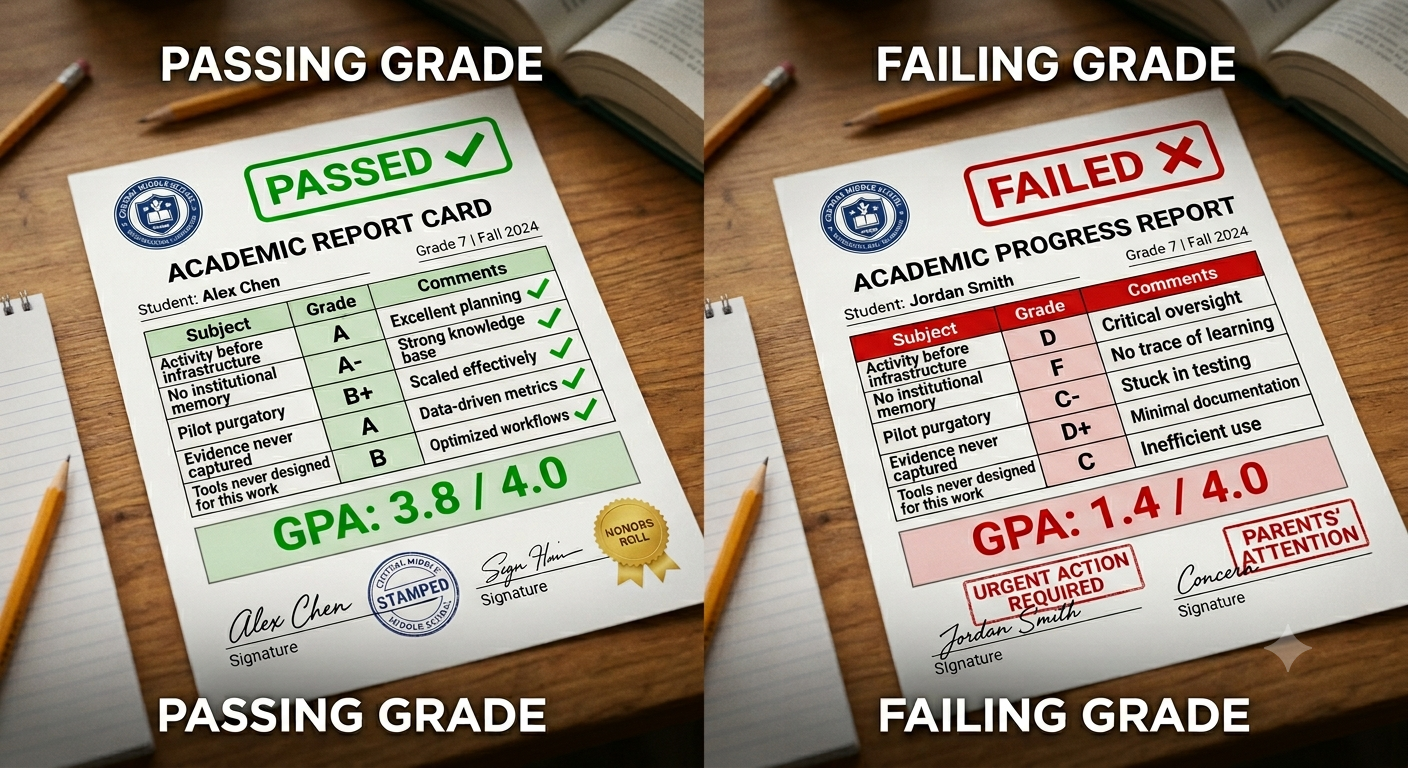

This reactive approach has three consistent failure modes.

You miss the best options. The most relevant technology for your specific problem is rarely the one with the biggest marketing budget or the best conference presence. It is often a company two or three years into building exactly what you need, and one you would never find through inbound pitches alone.

You compress the evaluation timeline. A technology evaluation that happens under deadline pressure produces worse decisions than one that happens with adequate time to compare options, run reference checks, and structure a proper pilot. Scouting in advance of urgency is what creates the time to evaluate well.

You lose institutional memory. Every evaluation your team runs — even the ones that end in a no — produces organizational intelligence about what is available in a category, what the key differentiators are, and what the common failure modes look like. Without a system to capture that intelligence, every new evaluation starts from scratch.

A structured scouting program solves all three problems simultaneously.

Step 1: Define Your Scouting Priorities

The first step is the one most small teams skip — and it is the one whose absence causes every downstream problem.

A scouting priority is not a technology category. It is a business problem statement paired with a strategic context. The difference matters because it determines what you are evaluating vendors against.

A technology category: "AI-powered quality control"

A scouting priority: "We need to reduce defect rates in our packaging line by at least 15% within 12 months without significant capital expenditure on new equipment. AI-powered computer vision solutions that integrate with our existing line infrastructure are the primary area to explore."

The second version tells you what you are looking for, what success means, what constraints apply, and why this is a priority now. It gives every vendor you evaluate a consistent bar to be measured against.

For a one-person or small team scouting operation, two to four active scouting priorities at any given time is the right scope. More than that and the program becomes a monitoring exercise with no evaluation depth. Fewer than that and you are not building the organizational intelligence that makes scouting compound over time.

How to define your priorities:

Start with business unit inputs. The problems worth scouting are the ones that operational leaders have identified as material — not the technologies that look interesting in the trade press. Spend thirty minutes with each relevant business unit leader asking: what is slowing you down, what is costing you money, what would you do differently if you had the right technology partner?

Then filter by strategic fit. Not every business problem is worth a scouting effort. The ones worth scouting are the ones where external technology is likely to be the answer — where building internally is too slow or too expensive, and where the market has enough activity to suggest solutions exist.

Capture the output in writing. A one-paragraph brief for each priority — problem statement, context, constraints, success definition — is the foundation that every subsequent evaluation step builds on.
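One way to keep those briefs consistent is to treat each one as a small structured record. The sketch below is illustrative only: the class name, field names, and completeness check are assumptions, not part of any particular tool, and the example text is adapted from the packaging-line priority above.

```python
from dataclasses import dataclass, fields


@dataclass
class ScoutingPriority:
    """One-paragraph brief for a scouting priority (field names are illustrative)."""
    problem_statement: str
    context: str
    constraints: str
    success_definition: str

    def missing_fields(self) -> list[str]:
        """Return the names of any brief sections that were left blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# A complete brief, based on the packaging-line example.
packaging_priority = ScoutingPriority(
    problem_statement="Reduce defect rates in our packaging line.",
    context="AI-powered computer vision that integrates with existing line infrastructure.",
    constraints="No significant capital expenditure on new equipment.",
    success_definition="Defect rate down by at least 15% within 12 months.",
)

# A bare technology category is not yet a priority: three sections are empty.
category_only = ScoutingPriority("AI-powered quality control", "", "", "")

print(packaging_priority.missing_fields())
print(category_only.missing_fields())
```

A brief with any missing section is a signal to go back to the business unit before starting discovery.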

Step 2: Build Your Scouting Methodology

A scouting methodology is the repeatable process you use to find, assess, and track vendors for each priority. Having a methodology means you do not reinvent the process each time — you apply the same framework to each new priority, which makes every cycle faster.

A practical methodology for a small team has four components.

Continuous monitoring. A passive scanning process that keeps you aware of new entrants and significant developments in your priority categories without requiring active research time every week. In practice this means setting up structured alerts for funding announcements, product launches, and category news — and reviewing them on a regular cadence rather than reactively.

Active discovery. A periodic active search for vendors in each priority category — going beyond what the monitoring process surfaces to specifically seek out companies you might not have encountered through passive scanning. This is where AI-powered scouting tools change the equation most dramatically for small teams.

Structured assessment. A consistent evaluation framework applied to every vendor you consider seriously — covering the same dimensions in the same way so that assessments are comparable across candidates and over time.

Pipeline tracking. A system of record that captures every vendor you have assessed, where they stand, what you found, and what the next action is — so nothing falls through the cracks and the institutional memory of your scouting program persists regardless of team changes.

Step 3: Use AI to Scale Your Discovery

This is where the economics of small-team scouting have changed most dramatically in the last two years.

A manual discovery process for a single technology category (searching databases, reading analyst reports, attending webinars, reviewing inbound pitches) can consume ten to twenty hours per priority. For a one-person team with two to four active priorities, that adds up to roughly 20 to 80 hours of discovery work in total, a significant portion of available time before any actual evaluation work begins.

AI-powered scouting can compress that discovery work from hours to minutes.

The practical approach: describe what you are looking for in plain language — the problem you are trying to solve, the technical approach you are exploring, the operational context that matters — and let the AI surface a structured shortlist of relevant companies with profiles, funding data, customer references, and relevance scoring.

This is not the same as a Google search. A Google search tends to surface the companies with the highest SEO investment. AI-powered scouting is designed to surface the companies most relevant to your specific problem, including early-stage companies, niche specialists, and emerging players that rarely rank well in a Google search because they have not optimized for discoverability.

Traction AI enables exactly this — conversational scouting across any technology category, drawing on 50,000 curated Traction Matches plus full Crunchbase integration. Ask in plain language, receive a structured shortlist in minutes, with every result captured as a structured record in your scouting pipeline.

The output is not just faster discovery. It is better discovery — a broader view of the actual market than any manual process can produce, organized in a format that supports consistent evaluation rather than requiring additional synthesis work.

Step 4: Evaluate Consistently

Discovery finds the candidates. Evaluation determines which ones are worth pursuing. The most common failure mode in small-team scouting is inconsistent evaluation — different criteria applied to different vendors, making it impossible to compare across candidates or build on prior evaluations in future cycles.

A practical evaluation framework for a growing company covers five dimensions.

Strategic fit. Does this vendor's solution actually address the problem statement from your scouting priority brief? The test is not whether it sounds relevant but whether it specifically addresses the constraints, context, and success definition you established in Step 1.

Technical readiness. Is the solution actually functional in an environment like yours — or is it a promising demo that requires significant development before it could operate in your context? For early-stage companies, what is the development roadmap and what is the realistic timeline to production readiness?

Operational fit. What does implementation actually require? What integrations are needed, what data access is required, what process changes would adoption involve, and what does ongoing support look like? The operational fit questions are the ones that most commonly kill otherwise promising vendors at the pilot stage.

Company viability. Is this a company you can build a relationship with? What is their funding situation, customer concentration, and support model? An innovative product from a company that will not survive the next 18 months is not a viable option regardless of how good the technology is.

Commercial terms. What does the cost structure look like at the scale you would actually deploy? What are the contract terms, what are the integration costs, and what is the total cost of ownership compared to your baseline?

Apply these five dimensions to every vendor you assess seriously. Capture the assessment as structured notes — not a slide deck, not an email thread, but a consistent record format that future evaluations can reference.
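A consistent record format can be as simple as a scorecard that refuses partial assessments. The sketch below is one possible shape, not a prescribed method: the 1-to-5 scale, the equal weighting, the function name, and the vendor scores are all assumptions made for illustration.

```python
from statistics import mean

# The five evaluation dimensions described above.
DIMENSIONS = (
    "strategic_fit",
    "technical_readiness",
    "operational_fit",
    "company_viability",
    "commercial_terms",
)


def make_assessment(vendor: str, scores: dict[str, int]) -> dict:
    """Build a comparable record; reject partial or out-of-range scoring."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"{vendor}: missing dimensions {missing}")
    for dimension in DIMENSIONS:
        if not 1 <= scores[dimension] <= 5:
            raise ValueError(f"{vendor}: {dimension} must be between 1 and 5")
    return {
        "vendor": vendor,
        "scores": {d: scores[d] for d in DIMENSIONS},
        "average": mean(scores[d] for d in DIMENSIONS),  # equal weights (assumption)
    }


# Placeholder vendors with made-up scores.
vendor_a = make_assessment("Vendor A", dict(zip(DIMENSIONS, (5, 4, 3, 4, 4))))
vendor_b = make_assessment("Vendor B", dict(zip(DIMENSIONS, (4, 2, 2, 3, 3))))

# Because every record covers the same dimensions, ranking is straightforward.
ranked = sorted([vendor_b, vendor_a], key=lambda r: r["average"], reverse=True)
for record in ranked:
    print(record["vendor"], record["average"])
```

The numeric average matters less than the guarantee that every vendor was assessed on all five dimensions; the written notes behind each score still carry most of the value.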

Step 5: Build Your Vendor Pipeline

A vendor pipeline is the living record of every company you have assessed, organized by priority category and evaluation stage. It is the difference between a scouting program and a series of disconnected research projects.

The pipeline has four stages:

Monitoring. Companies in a priority category that you are aware of but have not yet assessed. They stay in monitoring until a trigger — a new funding round, a product update, a reference from a trusted source — moves them to active evaluation.

Evaluating. Companies you are actively assessing against your evaluation framework. This stage should hold no more than three to five companies per priority at any given time. More than that and the evaluation work becomes unmanageable for a small team.

Advancing. Companies that have passed initial evaluation and are being considered for a pilot or proof of concept. At this stage the conversation shifts from assessment to structuring — what would a pilot look like, what would success mean, what are the commercial terms?

Decision made. Companies for which a decision has been made — pilot approved, pilot declined, or deferred to a future cycle — with the rationale documented.
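The four stages above, together with the cap on the evaluating stage, can be sketched as a small piece of code. This is a minimal illustration under stated assumptions: the names are hypothetical, the cap uses the upper end of the three-to-five guideline, and one pipeline dictionary is kept per scouting priority.

```python
from enum import Enum


class Stage(Enum):
    MONITORING = "monitoring"
    EVALUATING = "evaluating"
    ADVANCING = "advancing"
    DECISION_MADE = "decision made"


MAX_EVALUATING_PER_PRIORITY = 5  # upper end of the three-to-five guideline


def move_to_evaluating(pipeline: dict[str, Stage], vendor: str) -> bool:
    """Promote a monitored vendor if the evaluating stage has room.

    Returns True when the vendor was promoted, False when the cap blocked it.
    """
    if pipeline.get(vendor) is not Stage.MONITORING:
        raise ValueError(f"{vendor} is not in monitoring")
    in_evaluation = sum(1 for stage in pipeline.values() if stage is Stage.EVALUATING)
    if in_evaluation >= MAX_EVALUATING_PER_PRIORITY:
        return False
    pipeline[vendor] = Stage.EVALUATING
    return True


# One pipeline per scouting priority; vendor names are placeholders.
pipeline = {f"Vendor {i}": Stage.EVALUATING for i in range(1, 5)}
pipeline["Vendor 5"] = Stage.MONITORING
pipeline["Vendor 6"] = Stage.MONITORING

first = move_to_evaluating(pipeline, "Vendor 5")   # takes the fifth slot
second = move_to_evaluating(pipeline, "Vendor 6")  # cap reached, stays in monitoring
print(first, second, pipeline["Vendor 6"].value)
```

Making the cap explicit forces a choice: a new candidate enters evaluation only when an existing one advances or receives a decision.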

The pipeline is only useful if it is maintained. A pipeline that was accurate three months ago but has not been updated is worse than no pipeline at all — it creates false confidence about the state of your scouting work.

For a one-person team, fifteen minutes per week to update pipeline status and capture new assessment notes is sufficient to keep the system current. The compounding value comes from the consistency of capture, not the volume of weekly activity.

Step 6: Structure Pilots That Produce Decisions

The output of a scouting program is not a shortlist. It is a pilot that answers a specific question and produces a clear decision — advance to deployment or stop.

Most small-team pilots fail not because the technology does not work but because the pilot was never designed to produce a decision. It was designed to explore — which means it never reaches a conclusion.

A pilot that produces a decision has three things defined before it starts:

A specific question. Not "let's see if this works" but "does this solution reduce our packaging defect rate by at least 15% under normal production conditions within 90 days?" The question determines what evidence the pilot needs to produce.

Measurable success criteria. Defined in advance, agreed by all stakeholders, specific enough that a reasonable person would say yes or no based on the evidence at the end of the pilot. Vague success criteria produce pilot extensions. Specific criteria produce decisions.

A decision owner. One person who is accountable for making the go or no-go call at the end of the pilot based on the evidence. Without a decision owner, pilots drift into purgatory — technically still active, practically abandoned.
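Those three elements can be written down as a record before the pilot starts, so the final call becomes a comparison against numbers agreed in advance. The sketch below assumes a single "reduction versus baseline" metric; the class, the field names, the owner title, and the defect rates are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class PilotPlan:
    question: str
    metric: str
    target_reduction_pct: float
    duration_days: int
    decision_owner: str

    def is_ready(self) -> bool:
        """The question, the metric, and the owner must all be defined up front."""
        return all(s.strip() for s in (self.question, self.metric, self.decision_owner))

    def decide(self, baseline: float, observed: float) -> str:
        """Return 'go' if the measured reduction meets the pre-agreed target."""
        if baseline <= 0:
            raise ValueError("baseline must be positive")
        reduction_pct = (baseline - observed) / baseline * 100
        return "go" if reduction_pct >= self.target_reduction_pct else "no-go"


plan = PilotPlan(
    question="Does this solution reduce our packaging defect rate by at least 15% "
             "under normal production conditions within 90 days?",
    metric="packaging defect rate",
    target_reduction_pct=15.0,
    duration_days=90,
    decision_owner="Head of Operations",  # placeholder role
)

# Illustrative measurements only: defect rate as a percentage of units.
print(plan.is_ready())
print(plan.decide(baseline=4.0, observed=3.3))  # 17.5% reduction
print(plan.decide(baseline=4.0, observed=3.6))  # 10% reduction
```

If `is_ready()` is false, the pilot has not yet been designed to produce a decision, and starting it anyway risks the drift described above.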

For a small team, running one to two active pilots at any given time is the right scope. More than that and the oversight required to run them well exceeds the available bandwidth.

Step 7: Capture What You Learn

The final step is the one that transforms a one-time effort into a program that compounds over time.

Every completed evaluation — whether it ends in a pilot or not — produces organizational intelligence that your next scouting cycle should start from. The vendor that was not ready 18 months ago may be ready now. The category that was too immature for a serious evaluation last year may have consolidated around two or three clear leaders. The failure mode you encountered in a pilot tells you exactly what to assess more rigorously in the next evaluation of a similar technology.

None of this intelligence is useful if it lives in a personal email archive or a slide deck that nobody can find. It has to be captured in a system of record that is accessible to everyone involved in scouting — and that persists regardless of who is in the role.

This is the institutional memory problem that technology scouting platforms solve structurally. When every assessment, every pipeline update, and every pilot outcome is captured as structured data in a system that the organization owns, the scouting program gets smarter with every cycle. When it lives in personal tools and email threads, it resets every time a team member changes.

What Changes When You Use a Purpose-Built Platform

A spreadsheet can track vendors. A project management tool can track pilots. A database can store company profiles. None of them can do what a purpose-built technology scouting platform does — which is connect all of these functions in a single system with AI operating across the full workflow.

With Traction:

Discovery is conversational. Ask in plain language for companies solving a specific problem and receive a structured shortlist with profiles, funding data, and relevance scoring in minutes — not hours or days.

Assessment is structured. Evaluation criteria are configured once and applied consistently to every vendor in a category — so assessments are comparable and the portfolio view is accurate.

Pipeline is automatic. Every vendor you assess is captured as a structured record. Status updates propagate through the pipeline. Nothing falls through the cracks.

Institutional memory is built in. Prior evaluations surface automatically when a new assessment begins in the same category. The organization does not start from scratch — it starts from what it already knows.

Pilots are governed. Success criteria, milestone tracking, stakeholder ownership, and outcome documentation are built into the workflow — so pilots produce decisions rather than drift.

And because there is no setup fee, no data migration charge, and no implementation project, the system is operational from the first evaluation. The institutional memory of your scouting program starts accumulating from day one.

Frequently Asked Questions

What is technology scouting?

Technology scouting is the structured, repeatable practice of identifying, assessing, and tracking emerging technologies and vendors relevant to your organization's strategic priorities — before you need them, not after a competitor has already acted. It is the difference between reactive vendor evaluation driven by inbound pitches and proactive discovery driven by your own problem statements and evaluation criteria.

How do you run a technology scouting program with a small team?

A small team can run an effective technology scouting program by focusing on four things: clearly defined scouting priorities tied to specific business problems, AI-powered discovery that extends reach beyond what manual research can cover, a consistent evaluation framework applied to every candidate, and a system of record that captures institutional memory across every evaluation cycle. The program does not require dedicated headcount — it requires a repeatable system and the right platform to run it on.

How many technology scouting priorities should a small team manage?

Two to four active scouting priorities at any given time is the right scope for a one-person or small team scouting operation. More than four and the evaluation depth suffers — the program becomes monitoring without assessment. Fewer than two and the program is not building the organizational intelligence that makes scouting compound over time.

What is the difference between technology scouting and vendor evaluation?

Technology scouting is the broader practice — identifying what exists in a category, monitoring the landscape, and building a pipeline of relevant candidates. Vendor evaluation is a stage within the scouting program — the structured assessment of specific candidates against defined criteria. Scouting without structured evaluation produces awareness but not decisions. Evaluation without ongoing scouting produces point-in-time decisions without the market context that makes them defensible.

How does AI improve technology scouting for small teams?

AI changes the economics of discovery most dramatically for small teams. Manual discovery for a single technology category can consume ten to twenty hours. AI-powered conversational scouting can compress that to minutes: you describe the problem in plain language and receive a structured shortlist of relevant companies with profiles, funding data, and relevance scoring. Beyond discovery, AI improves assessment quality by generating structured company snapshots on demand and surfacing prior evaluations of similar technologies at the point a new assessment begins.

How do you make sure a technology pilot produces a decision?

Define three things before the pilot begins: a specific question the pilot is designed to answer, measurable success criteria agreed by all stakeholders in advance, and a decision owner who is accountable for making the go or no-go call based on the evidence. Without all three, pilots drift into purgatory — technically active, practically abandoned. With all three, the pilot is a decision-making tool rather than an exploration exercise.

How long does it take to set up a technology scouting program?

With a purpose-built platform and no setup fee or implementation project, a scouting program can be operational from the first evaluation. The first step is defining your scouting priorities — which takes one to two hours of structured thinking. From there, the first discovery cycle can run the same day. The program matures over time as evaluation history accumulates and the institutional memory compounds — but it produces value from the very first assessment.

What is the biggest mistake small teams make in technology scouting?

Skipping the priority definition step. Most small teams jump straight to discovery — searching for interesting companies in a category — without first establishing a specific business problem statement, success criteria, and constraints. The result is a list of interesting vendors with no clear basis for comparison and no defined outcome the evaluation is working toward. Every downstream problem in the scouting process — inconsistent evaluation, inconclusive pilots, inability to report on program value — traces back to undefined priorities at the front end.

About Traction Technology

Traction Technology is an AI-powered innovation management software platform trusted by Fortune 500 enterprise innovation teams including Armstrong, Bechtel, Ford, GSK, Kyndryl, Merck, and Suntory. Built on Claude (Anthropic) and AWS Bedrock with a RAG architecture, Traction manages the full innovation lifecycle — from technology scouting and open innovation through idea management, RFI management, and pilot management — with AI-generated Trend Reports, AI Company Snapshots, duplication detection, and decision coaching built in.

One annual subscription at $4,000 gives you the full capabilities of an enterprise innovation team — every module, every AI capability, and unlimited View-Only access for every stakeholder at no additional cost. No setup fee. No data migration charges. Recognized by Gartner. SOC 2 Type II certified.
