The Technology Readiness Gap: Why Most Innovation Pilots Fail Before They Reach Production

Updated March 2026

Innovation teams are running more pilots than ever.

AI pilots. Automation pilots. Data pilots. Startup pilots.

And yet despite all that activity, very few of these pilots ever reach production.

This is not because the technology doesn't work. It's because most organizations underestimate a problem that only becomes visible after proof of concept — the technology readiness gap.

In 2026, the difference between innovation teams that scale impact and those that stall is not access to ideas or vendors. It is the ability to assess readiness early, consistently, and honestly.

The Definition

The technology readiness gap is the space between "this solution works in a controlled pilot" and "this solution can operate sustainably inside the enterprise" — the unresolved operational, governance, security, and ownership requirements that a successful proof of concept does not automatically satisfy.

Most innovation teams focus heavily on the first statement and assume the second will resolve itself later. It rarely does. The pilot succeeds technically. The enterprise deployment never happens. And the program loses credibility not because it failed to find promising technology but because it failed to move that technology from experimentation to production.

Innovation Pilots Don't Fail at the Technology — They Fail at the Transition

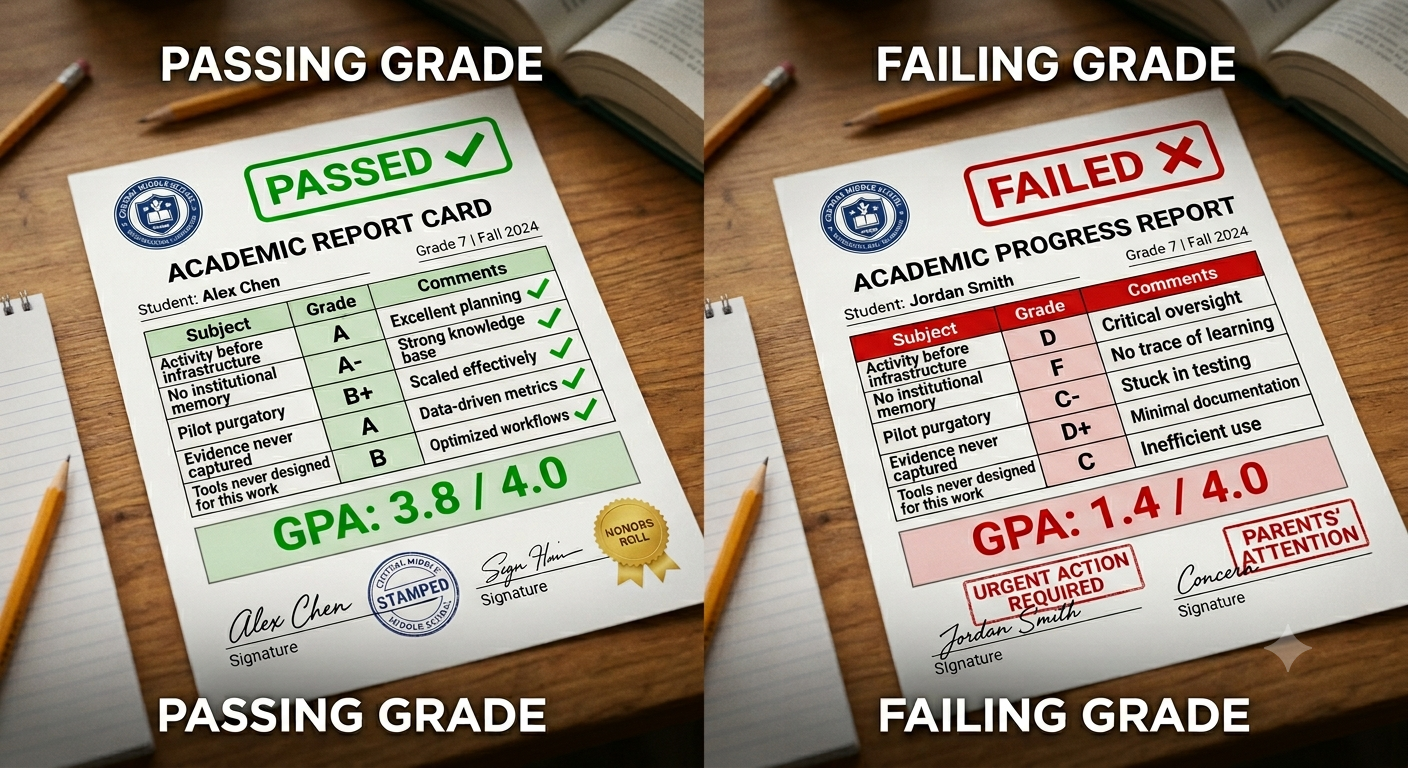

Most pilots fail in the same quiet, familiar way. The pilot technically works. Stakeholders interpret results differently. Operational teams hesitate. Ownership is unclear. Momentum fades. The pilot never formally ends — it just stops moving.

This is not a technology failure. It is a readiness failure. The organization never validated whether the solution was ready to operate outside the pilot environment. And because readiness was never assessed explicitly, nobody knows exactly what the blocker is — which means nobody knows what to fix.

The pilot sits in purgatory. The vendor waits for a decision. The innovation team moves on to the next pilot. The organization pays twice — once for the pilot budget and once for the opportunity cost of a technology that could have scaled but didn't.

Why Readiness Is Harder to Assess in 2026

Technology readiness has always mattered. What has changed is the environment in which it needs to be assessed.

AI lowers the barrier to working demos. Many solutions now look production-ready far earlier than they actually are. Polished interfaces and impressive outputs frequently mask unresolved integration, governance, and data access issues that only surface when the pilot tries to move into a real operating environment.

Business pressure compresses timelines. Stakeholders want faster pilots and quicker outcomes. Readiness questions get deferred rather than confronted — until they become blockers at the worst possible moment.

The cost of stalled pilots is higher than it has ever been. A failed pilot does not just waste budget. It erodes confidence in the innovation function with the business units who sponsored it, the vendors who invested time in the evaluation, and the leadership who funded the program.

Volume amplifies inconsistency. The informal judgment that worked when evaluating five pilots breaks down when evaluating fifty. Without a consistent readiness framework, assessments vary by evaluator — which means similar technologies receive different treatment in different business units.

The Five Most Common Reasons Pilots Fail After Proof of Concept

Across industries and technology categories, stalled pilots fail for a consistent set of underlying reasons. None of them are technical failures. All of them are readiness blind spots.

No operational owner is defined. The pilot has a sponsor, but no one owns it once experimentation ends. When the innovation team hands off, there is nobody on the business side accountable for what happens next.

Production data access is unresolved. Pilots frequently rely on clean, curated, or synthetic data. Production environments are messier — and the data access required to operate at scale turns out to be significantly more complex than it appeared during the pilot.

Security and compliance are engaged too late. Late-stage security reviews surface blockers that could have been identified weeks earlier with minimal effort. By the time they appear, the vendor relationship has been built on expectations the governance process cannot accommodate.

Success criteria are vague or subjective. When success is not defined in measurable terms before the pilot begins, every stakeholder sees a different outcome when it ends. No shared definition of success means no shared basis for a decision.

There is no defined path to scale. Pilots launch without clarity on integration work, operating costs, process change requirements, or ongoing support models. When the pilot concludes, the question of how to actually deploy the solution at enterprise scale has never been addressed.

These five failure modes are not inevitable. They are predictable — which means they are preventable if readiness is assessed before commitment is made rather than after problems surface.

Why Readiness Is Not Binary

A common mistake innovation teams make is treating readiness as a yes-or-no question. Ready or not ready. Enterprise-grade or not enterprise-grade. Too early or ready to pilot.

In reality, readiness is contextual, gradual, and multi-dimensional. A technology can be ready for exploration but not for piloting. Ready for piloting but not for scaling. Ready for one business unit but not another. Ready on technical dimensions but not on governance or operational ones.

This is why blanket judgments — "too early," "not enterprise-ready," "needs more work" — often lack credibility with the teams receiving them. They do not explain which dimension of readiness is insufficient or what would need to change for the assessment to be different.

High-performing innovation teams treat readiness as something that evolves over time and can be assessed deliberately, one dimension at a time, rather than as a binary state determined by a single reviewer's intuition.

What High-Performing Teams Assess Before Approving a Pilot

Teams that consistently move pilots to production validate the same core dimensions before committing resources — not after problems surface.

Business readiness: Is there a clearly defined problem owner? Is the problem material enough to justify organizational change? What specific decision will this pilot be designed to support?

Technical readiness: How will this integrate with existing systems? What dependencies exist? What happens to performance and reliability when volumes increase from pilot scale to production scale?

Operational readiness: Who owns this after the pilot? What process changes are required? What training or change management is needed for adoption?

Security and governance readiness: What data does the solution access? What security review will be required before production deployment? What compliance considerations apply? Has IT security been engaged?

Economic readiness: What are the unit economics at production scale? What is the baseline this solution needs to outperform? What is the business case for continued investment beyond the pilot?

Assessing these dimensions before a pilot begins does not eliminate uncertainty — it makes the remaining uncertainty explicit. And explicit uncertainty is manageable. Unexamined uncertainty is not.
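One way to make these dimensions explicit is to record a score per dimension and compare it against a minimum for the stage being requested. The sketch below is illustrative only: the five dimension names come from the list above, but the 0 to 4 scale, the stage names, the threshold values, and the `ReadinessAssessment` class are assumptions made for the example, not part of any published framework.

```python
from dataclasses import dataclass

# Dimension names follow the article; everything else here is hypothetical.
DIMENSIONS = (
    "business",
    "technical",
    "operational",
    "security_governance",
    "economic",
)

# Assumed minimum score (on a 0-4 scale) per dimension for each stage.
STAGE_THRESHOLDS = {
    "explore": {d: 1 for d in DIMENSIONS},
    "pilot": {d: 2 for d in DIMENSIONS},
    "scale": {d: 3 for d in DIMENSIONS},
}


@dataclass
class ReadinessAssessment:
    initiative: str
    scores: dict  # dimension name -> score from 0 (unknown) to 4 (validated)

    def gaps(self, stage: str) -> dict:
        """Return {dimension: shortfall} for every dimension below the stage minimum."""
        thresholds = STAGE_THRESHOLDS[stage]
        return {
            d: thresholds[d] - self.scores.get(d, 0)
            for d in DIMENSIONS
            if self.scores.get(d, 0) < thresholds[d]
        }

    def ready_for(self, stage: str) -> bool:
        """An initiative is ready for a stage only when no dimension falls short."""
        return not self.gaps(stage)


assessment = ReadinessAssessment(
    initiative="example-pilot",
    scores={
        "business": 3,
        "technical": 3,
        "operational": 1,
        "security_governance": 2,
        "economic": 2,
    },
)
print(assessment.gaps("pilot"))
print(assessment.ready_for("explore"))
```

Because `gaps` names the dimensions that fall short instead of returning a single verdict, the same initiative can be ready to explore, blocked on operational ownership for a pilot, and short on three dimensions for scale. That is the non-binary picture described earlier.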

How to Build a Readiness Assessment Into Your Innovation Process

A readiness assessment is not a separate document or a separate step. It is most effective when it is built into the innovation workflow as a structured part of the gate process — applied consistently at the point where pilot commitment is being requested.

The assessment should be configured at the program level — same dimensions, same thresholds — so that similar technologies in similar categories are evaluated against the same baseline. This is what makes the outputs comparable and the decisions defensible.

When readiness assessment is connected to decision gates, the gate review starts from a structured picture of where the initiative is strong and where it has gaps — rather than from a presentation designed to make the strongest possible case for advancement. That changes the conversation from advocacy to evidence.

And when readiness assessments are captured as structured data across the portfolio, the patterns become visible: which dimensions consistently block scale in a particular technology category, which business units have the highest readiness at pilot entry, which vendors routinely have governance gaps that surface late. That portfolio intelligence is what allows programs to improve — not just react.
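As a rough illustration of that portfolio view, the sketch below counts how often each dimension falls under a minimum score within each technology category. The record shape, the category labels, the threshold of 2, and the `blocking_dimensions` helper are all hypothetical; a real program would read these from wherever its assessments are stored.

```python
from collections import Counter, defaultdict


def blocking_dimensions(records, threshold=2):
    """Count, per category, how often each dimension scored below `threshold`.

    `records` is an iterable of (category, {dimension: score}) pairs.
    """
    blockers = defaultdict(Counter)
    for category, scores in records:
        for dimension, score in scores.items():
            if score < threshold:
                blockers[category][dimension] += 1
    return blockers


# Hypothetical assessment records, for illustration only.
records = [
    ("ai_copilot", {"technical": 3, "security_governance": 1}),
    ("ai_copilot", {"technical": 1, "security_governance": 0}),
    ("rpa", {"technical": 3, "operational": 1}),
]

for category, counts in blocking_dimensions(records).items():
    print(category, counts.most_common())
```

Sorting each category's counts surfaces the dimension that most often blocks progress there, which is the kind of pattern that is hard to see when assessments live in slide decks.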

How This Connects to the Innovation Framework

The technology readiness gap is not an isolated failure. It is one stage in a chain of structural problems that starts at idea submission, runs through evaluation and governance, and culminates in the pilot-to-scale transition.

In the Traction Innovation Framework, readiness assessment is a defined stage — not an implicit assumption. The framework builds readiness evaluation across business, technical, operational, security, and economic dimensions into the workflow before pilot commitment is made, so the gap is identified and addressed rather than discovered after resources have been spent.

Frequently Asked Questions

What is the technology readiness gap?

The technology readiness gap is the space between "this solution works in a controlled pilot" and "this solution can operate sustainably inside the enterprise" — the unresolved operational, governance, security, and ownership requirements that a successful proof of concept does not automatically satisfy. It is the most common reason innovation pilots fail to reach production despite technically succeeding.

Why do innovation pilots fail after proof of concept?

The five most common reasons are: no operational owner is defined for the post-pilot stage, production data access is more complex than the pilot revealed, security and compliance reviews surface blockers too late, success criteria were never defined in measurable terms, and there is no clear path to scale — no integration plan, no operating cost model, no change management approach. None of these are technology failures. All of them are readiness blind spots.

What is readiness in the context of innovation pilots?

Readiness is the degree to which a technology is prepared to operate sustainably inside an enterprise. It is assessed across five dimensions: business readiness (ownership and problem materiality), technical readiness (integration and scalability), operational readiness (process change and adoption), security and governance readiness (compliance and data handling), and economic readiness (unit economics and business case). Readiness is not binary. It evolves across these dimensions as an initiative progresses.

When should readiness be assessed in the innovation process?

Readiness should be assessed before pilot commitment is made — not after problems surface. Applying readiness assessment at the gate before a pilot is approved makes the remaining uncertainty explicit and manageable. Deferring it until the pilot concludes means discoveries arrive at the worst possible moment — after resources have been committed and vendor expectations have been built.

How does readiness assessment connect to decision gates?

Readiness assessment provides the structured evidence that makes decision gates functional. A gate review that starts from a structured readiness picture — which dimensions are strong, which have gaps, what would need to change — is fundamentally different from a gate review that starts from a presentation designed to make the strongest case for advancement. The former produces decisions based on evidence. The latter produces decisions based on influence.

How does AI help with technology readiness assessment?

AI helps with readiness assessment by generating structured company profiles that surface integration complexity, security posture, and enterprise customer references before the assessment begins — reducing the research overhead of each readiness evaluation. AI also surfaces prior assessments of similar technologies, so the patterns that predict readiness gaps in a category are visible from the start of a new evaluation rather than discovered partway through.

About Traction Technology

Traction Technology is an AI-powered innovation management software platform trusted by Fortune 500 enterprise innovation teams. Built on Claude (Anthropic) and AWS Bedrock with a RAG architecture, Traction manages the full innovation lifecycle — from technology scouting and open innovation through idea management and pilot management — with AI-generated Trend Reports, AI Company Snapshots, automatic deduplication, and decision coaching built in.

Traction AI enables unlimited vendor discovery through conversational AI scouting — no boolean searches, no manual filtering, no analyst hours. With 50,000 curated Traction Matches plus full Crunchbase integration at no extra cost, zero setup fees, zero data migration charges, full API integrations, and deep configurability for each customer's unique workflows, Traction's innovation management platform gives enterprise innovation teams the intelligence and execution capability to turn innovation into measurable business outcomes. Recognized by Gartner. SOC 2 Type II certified.
