Innovation Management Platform for Open Innovation Programs

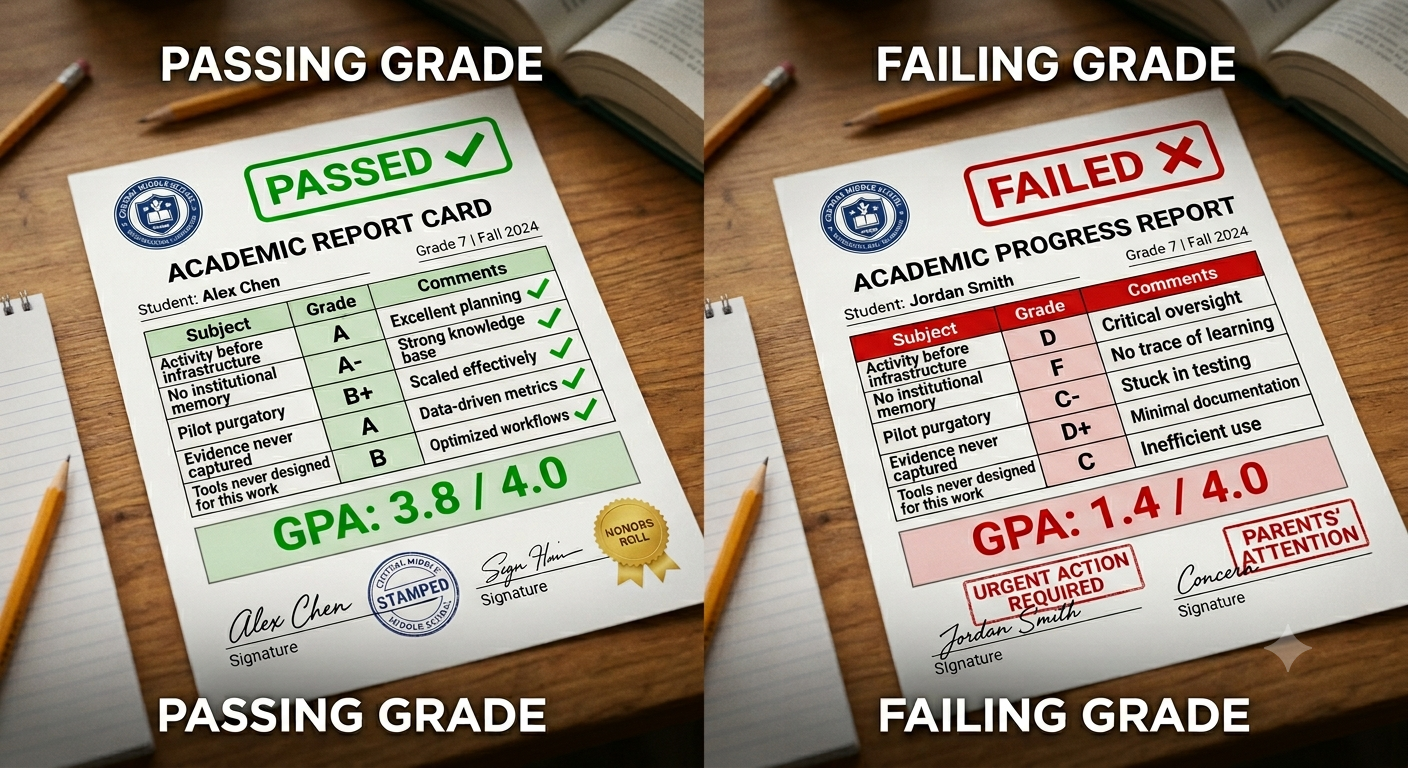

Most open innovation programs are being run on infrastructure that was never designed for the job.

A Head of Open Innovation at a global enterprise is managing challenge design, external submission intake, evaluation coordination across multiple business units, applicant communications, pilot pathway decisions, and program reporting — simultaneously, on a repeating cycle, with a team that is rarely large enough for the volume of work the program generates.

The tools they are using are a combination of whatever was available: a form builder for submissions, a spreadsheet for tracking, email for communication, a project management tool for pilot follow-up, and a slide deck for leadership reporting. Each tool handles one piece. None of them talk to each other. And when the program manager who built the system leaves, the institutional knowledge of how it all connects leaves with them.

This is not a problem limited to small programs. A global CPG company running six open innovation challenges per year has it at scale: six intake processes, six evaluation cycles, six sets of applicant communications, six pilot pathways, and six reporting obligations, each running in a partially manual, partially disconnected system that was not built to handle any of them end to end.

The question is not whether a purpose-built platform would be better. It obviously would. The question is whether the platform that claims to solve this problem actually solves all of it — or just replaces one of the point solutions while leaving the rest of the integration problem unsolved.

The Definition

An open innovation program is a structured, repeatable process through which an organization defines problems it cannot solve efficiently with internal resources, sources external solutions through challenges or solicitations, evaluates submissions through a governed workflow, advances qualifying submissions into pilots, and documents outcomes in a format that builds institutional memory across program cycles.

The word repeatable is the one that distinguishes a mature program from a one-time initiative. A single open innovation challenge is an event. A program is infrastructure — the same process, applied consistently, producing compounding organizational intelligence with every cycle.

The platform infrastructure has to support the program, not just the event. That distinction is what most Heads of Open Innovation are actually evaluating when they assess platforms.

For a comparison of open innovation platforms currently on the market and how to evaluate them, see: Why Open Innovation Platforms Matter and How to Pick the Best One

Why Point Solutions Fail Open Innovation Programs

The point solution problem is structural, not a matter of finding better individual tools.

Open innovation program management requires a continuous chain of connected operations: problem definition, challenge design, external promotion, submission intake, duplicate detection, evaluation coordination, applicant communication, pilot pathway management, outcome documentation, and program reporting. Each operation produces outputs that the next operation depends on.

When those operations run in separate tools, four things happen consistently.

Context breaks at every handoff. The submission record in the intake form does not automatically populate the evaluation workflow. The evaluation scores do not automatically inform the pilot setup. The pilot outcome does not automatically feed the program report. Every handoff requires manual work — exporting, reformatting, re-entering — that consumes time and introduces errors.

Institutional memory fragments. The challenge parameters from the last cycle are in one system. The evaluation rationale is in another. The pilot outcomes are in a project management tool that only the pilot team has access to. When the next challenge cycle begins, the team starts from partial information rather than from the complete record of what was learned.

Applicant experience suffers. External participants — startups, researchers, solution providers — are evaluating the organization as much as the organization is evaluating them. An intake process that feels disjointed, communication that is slow or inconsistent, and a pilot pathway that never materializes are signals that the organization is not serious about acting on what the program finds. The best applicants have options. They respond to programs that demonstrate operational competence.

Reporting is always retrospective and always manual. The program report is assembled after the fact by pulling data from multiple systems and reconciling inconsistencies. It measures activity — submissions received, evaluations completed, pilots launched — because that is what the disconnected systems can produce. It does not measure outcomes, because outcomes require connected data that the point solution architecture cannot generate.

What a Mature Open Innovation Program Actually Requires

The operational requirements of a mature open innovation program — one running multiple challenges per year across multiple business units — go well beyond what any single point solution can address.

Problem Definition and Challenge Design

Before a challenge launches, the organization needs to define the problem with enough specificity that external submissions can be meaningfully evaluated against it. Vague problem statements produce high submission volume and low evaluation quality. Specific problem statements with defined success criteria produce lower volume and dramatically higher signal.

The platform needs to support the problem definition process — capturing the strategic context, the business unit ownership, the evaluation criteria, and the intended pilot pathway — before the challenge goes live. This is the stage where most programs underinvest, and it is the stage whose quality most directly determines the program's outcomes.

External Submission Management

Open innovation challenges can receive hundreds or thousands of submissions. Without automated duplicate detection, structural similarity flagging, and intake routing, the evaluation team faces a volume problem before evaluation has even begun.

Traction's AI-powered duplicate detection identifies structurally similar submissions at intake — flagging companies or solutions that have been submitted before, evaluated before, or are substantially similar to current submissions — so the evaluation team focuses on genuinely distinct candidates rather than re-evaluating the same solutions under different packaging.
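As a rough illustration of what similarity flagging at intake involves, the sketch below compares a new submission summary against prior ones using plain token overlap (Jaccard similarity). This is not Traction's method, which the article describes only as AI-powered; the function names, the sample submissions, and the 0.6 threshold are all hypothetical.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase alphanumeric word tokens, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Share of distinct tokens that two texts have in common (0.0 to 1.0)."""
    ta, tb = tokens(a), tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def flag_similar(new_summary: str, prior: dict[str, str],
                 threshold: float = 0.6) -> list[tuple[str, float]]:
    """Return (submission_id, similarity) pairs at or above the threshold,
    most similar first. The threshold is an assumed value, not a recommendation."""
    scored = [(sid, round(jaccard(new_summary, text), 2))
              for sid, text in prior.items()]
    hits = [pair for pair in scored if pair[1] >= threshold]
    return sorted(hits, key=lambda pair: pair[1], reverse=True)


# Hypothetical prior submissions already on record.
prior_submissions = {
    "S-101": "Recyclable paper-based packaging film for snack products",
    "S-102": "Computer vision system for warehouse inventory counts",
}

flags = flag_similar(
    "Paper-based recyclable packaging film for snack foods",
    prior_submissions,
)
print(flags)
```

A production system would more likely compare semantic embeddings than raw tokens, but the workflow is the same: score each incoming submission against the existing record and route anything above a threshold to a reviewer instead of the main evaluation queue.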

Consistent Evaluation Across Business Units

The evaluation problem in multi-business-unit open innovation programs is consistency. When the same submission is evaluated by different teams with different criteria, the outputs are not comparable and the decisions are not defensible. When a submission that advanced in one business unit's evaluation was rejected by another's, the program cannot explain why, and the applicant cannot understand what happened.

Consistent evaluation criteria configured at the program level, with the same framework applied across all evaluations in a challenge cycle, are what make multi-business-unit programs governable. Every evaluator works from the same criteria. Every score reflects the same dimensions. The portfolio of evaluations is comparable. And the decision to advance or stop a submission is defensible to the applicant and to leadership.
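One way to picture program-level criteria is as a single shared rubric that every evaluator's scores are checked against. The sketch below is a minimal illustration under assumed names: the three criteria, their weights, and the 1 to 5 scale are hypothetical and are not taken from Traction or from any specific program.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float  # relative importance; the weights below sum to 1.0


# Hypothetical rubric, defined once at the program level and shared
# by every business unit evaluating submissions in the cycle.
RUBRIC = (
    Criterion("strategic_fit", 0.40),
    Criterion("technical_readiness", 0.35),
    Criterion("pilot_feasibility", 0.25),
)


def weighted_score(scores: dict[str, int], rubric=RUBRIC) -> float:
    """Combine one evaluator's 1-5 scores into a single weighted score.

    Rejects score sheets that skip a criterion or add one, so every
    evaluation in the cycle covers the same dimensions.
    """
    expected = {c.name for c in rubric}
    if set(scores) != expected:
        raise ValueError(f"scores must cover exactly: {sorted(expected)}")
    if any(not 1 <= value <= 5 for value in scores.values()):
        raise ValueError("each score must be between 1 and 5")
    return round(sum(c.weight * scores[c.name] for c in rubric), 2)


score = weighted_score(
    {"strategic_fit": 4, "technical_readiness": 3, "pilot_feasibility": 5}
)
print(score)
```

Because the rubric is validated on every score sheet, two business units cannot quietly evaluate the same submission on different dimensions, which is the comparability property the section describes.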

Pilot Pathway Management

The most common failure mode in open innovation programs is not a bad challenge or a weak applicant pool. It is the absence of a prepared pilot pathway for qualifying submissions.

A submission advances through evaluation, receives positive internal feedback, gets designated as a priority — and then waits six months for someone to figure out how to run a pilot with an external company. The governance, the commercial terms, the integration requirements, the stakeholder alignment — none of it was prepared in advance, because the program was designed to manage the front end of the process but not the back end.

Purpose-built pilot management connected to the evaluation workflow is what closes this gap. When a submission advances through evaluation, it moves directly into a pilot setup workflow in the same platform — with the full evaluation record visible to the pilot team, the success criteria defined, the stakeholder ownership assigned, and the governance process initiated. The handoff that normally loses momentum becomes a structured next step.

This is the capability that most directly separates Traction from most other platforms in this category. Those platforms manage submissions through evaluation and then require the organization to move qualifying submissions into a separate tool to run the pilot. That handoff is where open innovation programs lose momentum and where institutional memory breaks down.

Applicant Communication and Relationship Management

External participants in open innovation programs are organizational assets — even the ones that do not advance. A startup that submitted a solution that was not the right fit for this challenge may be exactly the right fit for a challenge in eighteen months. A researcher whose submission did not advance may be a collaboration partner for a different program.

The program that communicates clearly, provides specific feedback, and maintains the relationship after a non-advance decision builds a reputation in the external innovation ecosystem that makes future challenges more productive. The program that goes silent after evaluation does the opposite.

The platform needs to support structured applicant communication — status updates, feedback delivery, and relationship maintenance — as a workflow output rather than a manual task the program manager has to remember to do.

Program Reporting Connected to Outcomes

The open innovation program report that leadership actually wants is not a count of submissions received and evaluations completed. It is a demonstration that the program is producing qualified pilots, that those pilots are advancing toward scale decisions, and that the investment in the program is producing business outcomes.

That report requires data that only exists if the platform captures it throughout the lifecycle — challenge parameters, evaluation scores and rationale, pilot setup details, milestone progress, and outcome codes. When that data exists as structured, connected records, the program report is generated from the platform rather than assembled manually from disconnected sources.
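To make the idea of structured, connected records concrete, the sketch below keeps evaluation and pilot data on one record per submission and derives a small outcome report from it. The field names, the outcome codes ("scaled", "stopped", "in_progress"), and the sample data are assumptions for illustration only and do not describe Traction's data model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SubmissionRecord:
    submission_id: str
    challenge: str
    evaluation_score: Optional[float] = None  # filled in at evaluation
    pilot_outcome: Optional[str] = None       # hypothetical outcome code


def program_report(records: list[SubmissionRecord]) -> dict:
    """Summarize the cycle from connected records, with no manual reconciliation."""
    evaluated = [r for r in records if r.evaluation_score is not None]
    piloted = [r for r in records if r.pilot_outcome is not None]
    scaled = [r for r in piloted if r.pilot_outcome == "scaled"]
    return {
        "submissions": len(records),
        "evaluated": len(evaluated),
        "pilots": len(piloted),
        "scaled": len(scaled),
        # Share of evaluated submissions that reached a pilot.
        "pilot_rate": round(len(piloted) / len(evaluated), 2) if evaluated else 0.0,
    }


# Hypothetical records from one challenge cycle.
records = [
    SubmissionRecord("S-1", "Sustainable packaging", 4.2, "scaled"),
    SubmissionRecord("S-2", "Sustainable packaging", 3.1),
    SubmissionRecord("S-3", "Sustainable packaging", 4.5, "in_progress"),
    SubmissionRecord("S-4", "Sustainable packaging"),
]

report = program_report(records)
print(report)
```

The point of the sketch is that outcome measures such as the pilot rate or the number of scaled pilots fall out of the data only when evaluation and pilot fields live on the same record; with separate tools, each figure would need to be joined by hand.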

For a detailed guide on which metrics open innovation programs should track and how to present them to leadership, see: Essential KPIs for Open Innovation Teams

Why One Platform at One Price Changes the Equation

The point solution architecture is not just operationally inefficient — it is financially inefficient. A Head of Open Innovation managing six challenge cycles per year across a combination of form builders, spreadsheet trackers, project management tools, and reporting templates is paying for multiple subscriptions, spending significant time on integration overhead, and accepting a level of institutional memory loss that the program cannot recover.

The total cost of the point solution architecture — subscription fees, integration time, manual reconciliation, knowledge loss — is almost always higher than the cost of a purpose-built platform that handles the full lifecycle in a single system.

Traction's pricing is one subscription covering the full innovation lifecycle — open innovation challenges, technology scouting, idea management, pilot management, and portfolio reporting — with no setup fee, no data migration charges, and no per-module pricing that makes the full capability unaffordable. One platform. One price. Fully configurable to the specific workflows of your program.

The configurability matters as much as the coverage. Every open innovation program has its own challenge design conventions, evaluation criteria structures, pilot governance requirements, and reporting formats. A platform that forces programs into a predetermined workflow creates as many problems as the point solutions it replaces. Traction configures to how your program actually works — not to how the platform assumes all programs work.

How Traction Runs an Open Innovation Program End to End

Stage 1: Problem Definition. The business unit or program team defines the challenge: the problem statement, the strategic context, the evaluation criteria, and the intended pilot pathway. Traction structures this information as the foundation for every subsequent stage, so the evaluation and pilot teams always have access to the original intent of the challenge.

Stage 2: Challenge Launch and Promotion. The challenge goes live with a defined submission window, a structured intake form, and Traction's external-facing challenge portal. Applicants submit through a professional, branded experience that reflects the seriousness of the program.

Stage 3: Submission Intake and Deduplication. Submissions are captured in Traction as structured records. AI-powered duplicate detection flags similar submissions and prior evaluations. The evaluation queue is organized by challenge, category, and similarity cluster, so the evaluation team starts from a manageable, structured set rather than a raw volume problem.

Stage 4: Evaluation Coordination. Evaluators across business units work from the same criteria in the same platform. Scores are captured as structured data. Rationale is documented. The evaluation record is complete and comparable across all submissions in the cycle.

Stage 5: Pilot Pathway. Qualifying submissions move directly into a pilot setup workflow in Traction: same platform, connected record, full evaluation context visible to the pilot team. Success criteria are defined. Ownership is assigned. Governance is initiated. The momentum from evaluation carries into pilot execution rather than dissipating in a handoff gap.

Stage 6: Outcome Documentation and Program Reporting. Every pilot closes with a structured outcome record. The program report is generated from connected data (challenge parameters, evaluation scores, pilot outcomes, scale decisions) in a format that demonstrates program value in business terms.

When the next challenge cycle begins, the institutional memory from every prior cycle is accessible in the platform. The team starts smarter, not from scratch.

Frequently Asked Questions

What is an open innovation program?

An open innovation program is a structured, repeatable process through which an organization defines problems it cannot solve efficiently with internal resources, sources external solutions through challenges or solicitations, evaluates submissions through a governed workflow, advances qualifying submissions into pilots, and documents outcomes in a format that builds institutional memory across program cycles. The distinction between a program and a one-time challenge is repeatability — the same infrastructure, applied consistently, producing compounding intelligence with every cycle.

What is an open innovation management platform?

An open innovation management platform is software that manages the full lifecycle of an open innovation program — from challenge design and external submission intake through evaluation coordination, pilot pathway management, applicant communication, and program reporting — in a single connected system. The key distinction from point solutions is that all stages share a connected data record, so institutional memory accumulates rather than fragmenting across disconnected tools.

Why do open innovation programs fail?

The most common failure modes are vague problem statements that produce high submission volume and low evaluation quality, inconsistent evaluation criteria that make multi-business-unit assessments incomparable, the absence of a prepared pilot pathway for qualifying submissions, and point solution architectures that lose institutional memory at every handoff. Programs that produce outcomes consistently have explicit problem definition, standardized evaluation, connected pilot governance, and a platform that captures the full record of every program cycle.

What should a Head of Open Innovation look for in a platform?

Look for end-to-end coverage of the full program lifecycle in a single system, AI-powered duplicate detection and intake management, configurable evaluation workflows that accommodate the specific criteria of your program, connected pilot management that eliminates the handoff gap between evaluation and execution, structured applicant communication, and portfolio reporting that demonstrates program value in business outcomes rather than activity metrics. Pricing should be one subscription covering the full lifecycle with no setup fee and no per-module pricing.

How does Traction handle multiple open innovation challenges simultaneously?

Traction supports multiple concurrent challenges, each with its own problem statement, evaluation criteria, submission intake, and pilot pathway — all within the same platform and the same institutional memory. Program managers have portfolio-level visibility across all active challenges, with challenge-level detail available on demand. Evaluation teams in different business units work from challenge-specific criteria while the program manager maintains cross-challenge visibility and reporting.

How does Traction connect open innovation to pilot management?

When a submission advances through evaluation in Traction, it moves directly into a pilot setup workflow in the same platform — with the full evaluation record visible to the pilot team, the success criteria defined from the evaluation stage, stakeholder ownership assigned, and governance initiated. There is no handoff to a separate tool, no context lost in the transition, and no momentum gap between evaluation and execution. This connected workflow is the most significant operational difference between Traction and platforms that manage submissions through evaluation only.

What does Traction cost for an open innovation program?

Traction is one subscription covering the full innovation lifecycle — open innovation, technology scouting, idea management, pilot management, and portfolio reporting — with no setup fee, no data migration charges, and no per-module pricing. The platform is fully configurable to your program's specific workflows without implementation consulting fees.

How is Traction different from other open innovation platforms?

The primary differentiator is end-to-end coverage in a single platform at one price. Most open innovation platforms manage the front end of the process — challenge design, submission intake, evaluation — and then require the organization to manage pilot execution in a separate tool. That handoff is where programs lose momentum and institutional memory. Traction connects evaluation directly to pilot management in the same system, with no handoff gap, no separate tool, and no context lost in the transition. Combined with no setup fee, no data migration charges, and full configurability, this is the operational case that resonates most directly with Heads of Open Innovation who have lived the point solution problem.

Related Reading

- What Is Open Innovation? A Practical Guide for Enterprise Teams

- Why Open Innovation Platforms Matter and How to Pick the Best One

- Essential KPIs for Open Innovation Teams

- AI for Open Innovation: Key Strategies for Scouting Technologies and Matching Startups

- Why Pilot Management Software Is the Missing Link in Innovation Execution

- What Is an Innovation Management Framework? A Practical Guide for Enterprise Teams

- The Hidden Innovation Bottleneck: Idea Submission Without Context

- Innovation Management for R&D Teams

- What Is Innovation Management? A Practical Definition for Enterprise Teams

About Traction Technology

Traction Technology is an AI-powered innovation management software platform trusted by Fortune 500 enterprise innovation teams including Armstrong, Bechtel, Ford, GSK, Kyndryl, Merck, and Suntory. Built on Claude (Anthropic) and AWS Bedrock with a RAG architecture, Traction manages the full innovation lifecycle, from technology scouting (one million plus companies) and open innovation through idea management, RFI management, and pilot management, with AI-generated Trend Reports, AI Company Snapshots, duplicate detection, and decision coaching built in.

One annual subscription at $4,000 gives you the full capabilities of an enterprise innovation team — every module, every AI capability, and unlimited View-Only access for every stakeholder at no additional cost. No setup fee. No data migration charges. Recognized by Gartner. SOC 2 Type II certified.


About the Author

Neal Silverman is the Co-Founder and CEO of Traction. He has spent 25 years watching large enterprises struggle to collaborate effectively with startup ecosystems — not because the technologies aren't promising, but because most startups aren't ready to meet the demands of enterprise scale. Before Traction, he spent 15 years producing the DEMO Conference for IDG, where he evaluated thousands of early-stage companies and watched the best ideas stall at the enterprise door. That problem became Traction. Today he works with innovation teams at GSK, PepsiCo, Ford, Merck, Suntory, Bechtel, USPS, and others to help them institutionalize open innovation programs and build the infrastructure to scout, evaluate, and scale emerging technologies. Connect with Neal on LinkedIn.
