From Scouting to Scale: How Innovation Teams Evaluate Emerging Technologies

Updated March 2026

Innovation teams have never had more access to ideas, startups, and emerging technologies.

They have also never had a harder time answering the questions that actually matter:

- What is real versus noise?

- What is ready versus interesting?

- What is worth backing versus worth watching?

- What should we stop before we waste a quarter?

In 2026 the challenge is not finding innovation. It is making consistent, defensible decisions at scale — across ideas, vendors, and pilots — without slowing the organization down.

If you are an innovation manager, open innovation lead, or CIO, you already know the pattern. The pipeline fills quickly but decisions lag. Pilots start, momentum fades, outcomes get murky. Everyone is busy. Few things make it to production.

This post is a practical framework for how high-performing teams evaluate emerging technologies — and why the best innovation programs are shifting from idea management to decision management.

The Definition

Emerging technology evaluation is the structured process of assessing ideas, vendors, and technologies against defined criteria at each stage of the innovation lifecycle — from initial scouting through pilot validation and scale readiness — in a way that is consistent across evaluators, defensible to stakeholders, and connected to measurable business outcomes.

The shift from informal judgment to structured evaluation is not about adding bureaucracy. It is about making the decisions that innovation programs are already making faster, more consistent, and more defensible — so that the program earns and keeps the organizational credibility it needs to continue operating.

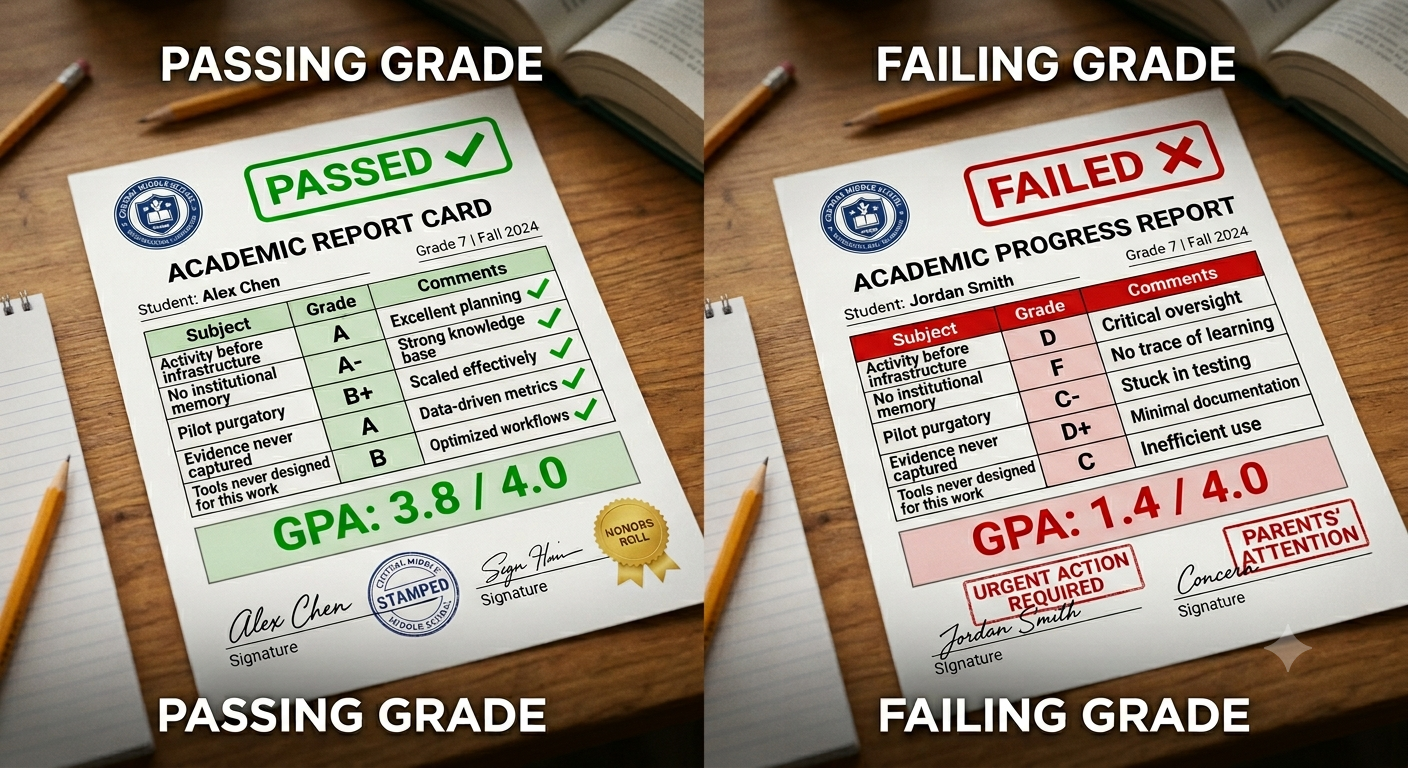

The Uncomfortable Truth: Most Innovation Programs Don't Fail at Innovation

They fail at evaluation.

Plenty of organizations can generate ideas, run challenges, meet startups, and launch pilots. What breaks is the ability to evaluate consistently when volume rises.

The symptoms are familiar:

- Too many inputs and not enough decisions

- Evaluation criteria that change depending on who is in the room

- Pilots that start fast and stall without a clear conclusion

- Stakeholder alignment that arrives late, after time and credibility are already spent

- Compelling narratives supported by weak evidence

This is not a motivation problem. It is a systems problem. And the system breaks because most evaluation processes are built on one assumption that no longer holds — that expert judgment, informal reviews, and intuition can scale.

They cannot. Not in an environment where a single technology category includes dozens of vendors, multiple architectural approaches, and capability shifts that move faster than any manual monitoring process can track.

Why Evaluation Is Harder in 2026

The signal-to-noise ratio is worse. AI has dramatically lowered the cost of producing credible-looking vendors, decks, demos, and prototypes. More solutions look polished earlier than they ever did. That does not mean they are enterprise-ready. It means the gap between looking ready and being ready has never been wider.

The time-to-pilot expectation is shorter. Business stakeholders have less patience for long evaluation cycles. The pressure to move from interest to pilot in weeks rather than months is real and is not going away.

The cost of a wrong pilot is higher. A failed pilot does not just consume budget. It burns stakeholder trust and crowds out bandwidth for better bets. The opportunity cost of a poorly evaluated pilot is the well-evaluated one that never got the resources.

The number of decisions has exploded. Evaluation is no longer a single decision made once for a technology category. It is a continuous decision process across dozens of vendors, multiple use cases, and fast-moving capability changes. The informal processes that worked for five evaluations per year do not work for fifty.

The Shift That Separates High-Performing Teams

Most organizations still measure innovation by activity — ideas submitted, startups reviewed, pilots launched, workshops run. Those metrics are not useless but they are incomplete. They measure motion, not direction.

High-performing teams measure innovation by decision quality:

- Time from scouting to decision

- Percentage of pilots with defined success criteria before launch

- Decision consistency across evaluators and business units

- Percentage of pilots that produce a clear outcome — scale, stop, or watch

- Repeatability of the evaluation process across technology categories

Activity can be outsourced. Decision quality cannot. And decision quality is what earns innovation teams the organizational credibility that sustains long-term investment.
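As a rough illustration, three of the decision-quality metrics listed above can be computed from a simple log of pilots. This is a minimal sketch in Python, not a description of any particular platform. The `PilotRecord` type, its field names, and the `decision_metrics` function are hypothetical, and using the median for time to decision is an assumption.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional

# Outcomes treated as "clear" here; anything else counts as unclear.
CLEAR_OUTCOMES = {"scale", "stop", "watch"}


@dataclass
class PilotRecord:
    """Hypothetical record for one pilot (all field names are assumptions)."""
    name: str
    scouted_on: date
    decided_on: Optional[date]            # None while no decision is recorded
    criteria_defined_before_launch: bool  # success criteria set before launch?
    outcome: Optional[str]                # "scale", "stop", "watch", or None


def decision_metrics(items: list[PilotRecord]) -> dict:
    """Summarize decision quality for a list of pilot records."""
    if not items:
        return {"median_days_to_decision": None,
                "pct_with_criteria": None,
                "pct_clear_outcome": None}

    # Time from scouting to decision, only for pilots that reached a decision.
    days = [(p.decided_on - p.scouted_on).days
            for p in items if p.decided_on is not None]
    with_criteria = sum(p.criteria_defined_before_launch for p in items)
    clear = sum(p.outcome in CLEAR_OUTCOMES for p in items)

    return {
        "median_days_to_decision": median(days) if days else None,
        "pct_with_criteria": 100 * with_criteria / len(items),
        "pct_clear_outcome": 100 * clear / len(items),
    }
```

A pilot with no recorded decision still counts in both percentages. That is deliberate in this sketch, so that stalled pilots lower the clear-outcome rate instead of disappearing from it.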

The Innovation Pipeline Is Not a Funnel — It Is a Series of Decisions

Most pipelines are treated as funnels where initiatives move forward if they are not rejected. That framing causes two problems. Teams optimize for throughput rather than quality. And "not rejected" becomes effectively the same as "approved."

A more accurate framing: your pipeline is a sequence of decision points, each designed to reduce uncertainty by answering a specific question before the next level of commitment is requested.

Each decision point asks a different question. Each requires different evidence. Each produces a different type of outcome.

This is what prevents pilots from becoming a default next step and what prevents the pipeline from filling with initiatives that have no clear path to a conclusion.

The Three-Layer Evaluation Stack

Think of emerging technology evaluation as three distinct layers. Mixing them is what causes confusion — applying the wrong type of evaluation to the wrong stage, or using the same criteria for a first-pass assessment that you would use for a scale decision.

Layer 1: Relevance — Is This Worth Attention?

This is where scouting happens. The goal is triage, not certainty. You are answering: is this technology solving a real problem that matters to the business, and is there credible signal that it is viable?

The evaluation at this stage is intentionally lightweight — a structured scan that surfaces relevant vendors and filters out noise without investing significant resources in companies that will not survive initial screening. AI-powered scouting is most valuable here — it extends the reach of the triage process beyond what any manual monitoring effort can cover.

What you produce: A qualified shortlist with enough context — company profiles, funding signals, customer references, technology approach — to make a relevance decision quickly.

What you do not need yet: Integration analysis, security review, detailed ROI modeling, or stakeholder alignment. Applying late-stage rigor at the triage stage kills exploration.

Layer 2: Readiness — Is This Enterprise-Ready for a Pilot?

This is where evaluation happens in depth. The goal is to assess whether a shortlisted technology is ready to operate inside a pilot environment — not whether it is ready to scale, but whether the remaining unknowns are operational and organizational rather than fundamental.

The evaluation at this stage covers five dimensions:

- Business readiness: problem ownership and materiality

- Technical readiness: integration and scalability

- Operational readiness: process change and adoption requirements

- Security and governance readiness: compliance, data handling, IT requirements

- Economic readiness: unit economics and business case plausibility

What you produce: A structured assessment across all five dimensions, with explicit identification of gaps and a recommended next action — advance to pilot, redirect to address specific gaps, or stop.

What you do not need yet: Final scale commitment or full production deployment planning. The readiness assessment informs the pilot decision, not the deployment decision.
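One way to picture the readiness output is as a small structured record with a rule that turns scores into a next action. The sketch below is illustrative only. The 1-to-5 scale, the `pass_score` and `blocker_score` thresholds, and the names `ReadinessAssessment` and `recommend` are all assumptions; the text itself specifies only the five dimensions and the three possible actions.

```python
from dataclasses import dataclass

# The five readiness dimensions named in the text.
DIMENSIONS = ("business", "technical", "operational",
              "security_governance", "economic")


@dataclass
class ReadinessAssessment:
    """Hypothetical assessment: one score per dimension on an assumed 1-5 scale."""
    vendor: str
    scores: dict[str, int]


def recommend(assessment: ReadinessAssessment,
              pass_score: int = 3,
              blocker_score: int = 1) -> tuple[str, list[str]]:
    """Return (next_action, gaps). Both thresholds are illustrative assumptions."""
    missing = [d for d in DIMENSIONS if d not in assessment.scores]
    if missing:
        # Refuse to recommend on a partial assessment.
        raise ValueError(f"missing scores for: {', '.join(missing)}")

    # A gap is any dimension scoring below the pass threshold.
    gaps = [d for d in DIMENSIONS if assessment.scores[d] < pass_score]
    # A blocker is any dimension at or below the blocker threshold.
    blockers = [d for d in DIMENSIONS if assessment.scores[d] <= blocker_score]

    if blockers:
        return "stop", gaps
    if gaps:
        return "redirect", gaps
    return "advance", gaps
```

Returning the list of gaps alongside the action keeps the explicit gap identification attached to the recommendation, so a "redirect" always says what needs to be addressed.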

Layer 3: Validation — Did the Pilot Answer Its Question?

This is where pilots are evaluated — not based on whether the technology performed impressively but based on whether the pilot answered the specific question it was designed to answer.

A pilot that was designed to test whether a technology could integrate with a legacy system answers a different question from one designed to test whether end users would adopt it. Evaluating both against the same criteria produces inconsistent outcomes. Evaluating each against its own defined success criteria produces decisions.

What you produce: A structured outcome — scale, stop, extend with specific changes, or redirect to a different use case — with documented rationale that feeds the institutional memory of the program.

What this requires: Success criteria defined before the pilot begins, not after it ends. This is the single most common process failure in innovation evaluation and the one with the most direct impact on pilot-to-scale conversion rates.
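The requirement that success criteria exist before the pilot begins can be enforced mechanically. The following is a minimal sketch under stated assumptions: `Criterion`, `evaluate_pilot`, and the example metric names are hypothetical, and the rule that criteria must be dated no later than the pilot start date is one possible reading of "before the pilot begins".

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Criterion:
    """Hypothetical success criterion: a named metric with a numeric target."""
    name: str
    target: float
    higher_is_better: bool = True

    def met(self, observed: float) -> bool:
        # Compare in the direction that counts as success for this metric.
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


def evaluate_pilot(criteria: list[Criterion],
                   defined_on: date,
                   pilot_start: date,
                   results: dict[str, float]) -> dict[str, bool]:
    """Check pilot results against criteria that were fixed in advance."""
    if defined_on > pilot_start:
        # Criteria written after launch cannot produce a defensible outcome.
        raise ValueError("success criteria must be defined before the pilot begins")

    missing = [c.name for c in criteria if c.name not in results]
    if missing:
        raise ValueError(f"no result recorded for: {', '.join(missing)}")

    # One pass/fail entry per predefined criterion.
    return {c.name: c.met(results[c.name]) for c in criteria}


# Example: an adoption pilot with one usage target and one latency ceiling.
criteria = [
    Criterion("adoption_rate", 0.6),
    Criterion("p95_latency_ms", 500, higher_is_better=False),
]
report = evaluate_pilot(criteria, date(2026, 1, 10), date(2026, 2, 1),
                        {"adoption_rate": 0.72, "p95_latency_ms": 640})
```

The function reports which criteria were met and deliberately stops short of choosing between scale, stop, extend, or redirect. That decision, and its documented rationale, stays with the team.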


The Most Common Evaluation Mistakes — and How to Fix Them

Applying the wrong layer at the wrong stage. Demanding full integration analysis at the triage stage kills exploration. Running lightweight relevance assessments at the pilot validation stage produces decisions without sufficient evidence. Matching evaluation depth to stage is the foundation of a functional evaluation process.

Changing criteria between evaluations. When similar vendors in the same category are evaluated against different criteria by different reviewers, the outputs are not comparable and the decisions are not defensible. Criteria should be defined at the program level and applied consistently — not reconfigured for each evaluation.

Evaluating without defined success criteria. A pilot that begins without measurable success criteria cannot produce a clear outcome. This is the most common and most expensive evaluation mistake. Before any pilot begins, the team should be able to answer: what would a successful result look like, and what would a result that does not justify scale look like?

Keeping evaluations in individuals' heads. When evaluation rationale lives in email threads and personal notes rather than a structured platform, it is not available to the next person who evaluates a similar technology. The organization pays for the same learning twice. Capturing every evaluation as structured, retrievable data is the foundation of institutional memory.

Mistaking a compelling narrative for evidence. In fast-moving technology categories, vendor presentations have never been more polished. A compelling demo is not evidence of enterprise readiness. Structured evaluation criteria that assess actual operational requirements, rather than presentation quality, are the protection against this failure mode.

How This Connects to the Innovation Framework

The three-layer evaluation stack is built into the Traction Innovation Framework as a connected progression from scouting through readiness assessment to pilot validation — with consistent criteria applied at each layer, structured outputs captured as institutional memory, and decision gates that produce explicit outcomes rather than deferred recommendations.


Frequently Asked Questions

What is emerging technology evaluation?

Emerging technology evaluation is the structured process of assessing ideas, vendors, and technologies against defined criteria at each stage of the innovation lifecycle — from initial scouting through pilot validation and scale readiness — in a way that is consistent across evaluators, defensible to stakeholders, and connected to measurable business outcomes.

Why is consistent evaluation important in innovation?

Consistent evaluation ensures that similar technologies in similar categories are assessed against the same criteria within the same framework, regardless of who is doing the evaluating or which business unit is sponsoring the initiative. Without consistency, evaluation outputs are not comparable, decisions are not defensible, and the portfolio view of the program cannot be trusted.

What are the three layers of innovation evaluation?

The three layers are relevance assessment (triage — is this technology worth attention?), readiness assessment (depth — is this technology ready for a pilot?), and pilot validation (conclusion — did the pilot answer its question?). Each layer requires different evidence, produces different outputs, and supports a different type of decision. Mixing them — applying the wrong layer at the wrong stage — is one of the most common sources of evaluation failure.

What are the most common innovation evaluation mistakes?

The five most common mistakes are: applying the wrong evaluation depth at the wrong stage, changing criteria between evaluations of similar technologies, running pilots without defined success criteria, keeping evaluation rationale in individuals' heads rather than a structured platform, and mistaking compelling vendor narratives for evidence of enterprise readiness.

How does AI improve emerging technology evaluation?

AI improves evaluation primarily at the triage layer — enabling conversational vendor discovery that surfaces relevant companies faster and more comprehensively than manual research. AI also generates structured company profiles and trend reports that reduce research overhead at the readiness assessment layer, and surfaces prior evaluations of similar technologies so teams are not starting from zero.

What is the difference between idea management and decision management?

Idea management focuses on capturing and organizing ideas — the front end of the innovation process. Decision management focuses on producing consistent, defensible decisions at every stage of the lifecycle — from relevance assessment through pilot validation and scale commitment. High-performing innovation programs treat idea management as one input to a decision management system, not as the primary output of the innovation function.

Related Reading

- What Is an Innovation Management Framework? A Practical Guide for Enterprise Teams

- The Technology Readiness Gap: Why Most Innovation Pilots Fail Before They Reach Production

- Readiness Is Not Binary: Why "Too Early" Is the Wrong Answer in Innovation

- How to Design Innovation Decision Gates That Actually Work

- How AI Is Transforming Technology Scouting: A Practical Guide for Enterprise Teams

- Why Pilot Management Software Is the Missing Link in Innovation Execution

About Traction Technology

Traction Technology is an AI-powered innovation management software platform trusted by Fortune 500 enterprise innovation teams including Armstrong, Bechtel, Ford, GSK, Kyndryl, Merck, and Suntory. Built on Claude (Anthropic) and AWS Bedrock with a RAG architecture, Traction manages the full innovation lifecycle, from technology scouting (more than one million companies) and open innovation through idea management, RFI management, and pilot management, with AI-generated Trend Reports, AI Company Snapshots, duplication detection, and decision coaching built in.

One annual subscription at $4,000 gives you the full capabilities of an enterprise innovation team — every module, every AI capability, and unlimited View-Only access for every stakeholder at no additional cost. No setup fee. No data migration charges. Recognized by Gartner. SOC 2 Type II certified.


About the Author

Neal Silverman is the Co-Founder and CEO of Traction. He has spent 25 years watching large enterprises struggle to collaborate effectively with startup ecosystems, not because the technologies aren't promising, but because most startups aren't ready to meet the demands of enterprise scale. Before Traction, he spent 15 years producing the DEMO Conference for IDG, where he evaluated thousands of early-stage companies and watched the best ideas stall at the enterprise door. That problem became Traction. Today he works with innovation teams at GSK, PepsiCo, Ford, Merck, Suntory, Bechtel, USPS, and others to help them institutionalize open innovation programs and build the infrastructure to scout, evaluate, and scale emerging technologies.
