Building an agenda for the critical challenges and opportunities in AI

This is the fourth Gaining Traction in AI post. Earlier posts are Gaining Traction in AI: The Economics of AI, Machine Learning and Data: AI is the Engine and Data is the Fuel, and Standing in the Shadow of AI. A final planned post will be AI Hardware: Gearing Up for an AI-Everywhere Economy.

Startup of the Week: Rethink Robotics

Rethink Robotics helps manufacturers meet the challenges of an agile economy with an integrated workforce, combining trainable, safe and cost-effective robots with skilled labor. Its Baxter robot, driven by Intera, an advanced software platform, gives world-class manufacturers and distributors in automotive, plastics, consumer goods, electronics and more, a workforce multiplier that optimizes labor. With Rethink Robotics, manufacturers increase flexibility, lower costs and can invest in skilled labor—all advantages in fueling continuous innovation and sustainable competitive advantage.

Why We Picked It: Rethink has a vision of robots that can collaborate with human workers, and be quickly reconfigured to undertake new tasks, a must in an agile manufacturing economy. Customers include GE Oil & Gas, DHL, and Steelcase.

Website: http://www.rethinkrobotics.com Location: Boston, MA Company Size: ~250

Trust and Transparency

There’s a well-documented issue with trusting AI systems: their inner workings generally aren’t transparent, and in many cases they can’t be interrogated to explain their ‘reasoning’ or demonstrate their decision-making process. Partly this is due to how the apps are designed, and partly it is due to the nature of ‘deep learning’. When a neural-network-based system is trained to perform some task, such as differentiating chihuahuas from blueberry muffins, it isn’t following a checklist or a clearly defined algorithm. The response is learned, much as a human child might learn it.

Dog or Muffin?

Ok. You explain how you know which are dogs and which are not. You. Just. Know. (PS I wondered if many chihuahuas are named Muffin: it’s in the top 100 for she-chihuahuas.)

But as AIs take the wheel of our cars, diagnose cancer, suggest who should get paroled, or make billion-dollar investments for hedge funds, people are less accepting of ‘who the hell knows how they do it.’ Yet as Will Knight recently pointed out, as a general rule it may not be feasible to understand what AI is up to, any more than we understand how people make decisions:

There’s already an argument that being able to interrogate an AI system about how it reached its conclusions is a fundamental legal right. Starting in the summer of 2018, the European Union may require that companies be able to give users an explanation for decisions that automated systems reach. This might be impossible, even for systems that seem relatively simple on the surface, such as the apps and websites that use deep learning to serve ads or recommend songs. The computers that run those services have programmed themselves, and they have done it in ways we cannot understand. Even the engineers who build these apps cannot fully explain their behavior.

Knight goes on to argue that people haven’t before made systems that we don’t fully understand. That’s not true: a great deal of the software we rely on day to day is too complex for its developers, and definitely its users, to understand, as James Somers pointed out in The Coming Software Apocalypse:

It’s been said that software is “eating the world.” More and more, critical systems that were once controlled mechanically, or by people, are coming to depend on code. This was perhaps never clearer than in the summer of 2015, when on a single day, United Airlines grounded its fleet because of a problem with its departure-management system; trading was suspended on the New York Stock Exchange after an upgrade; the front page of The Wall Street Journal’s website crashed; and Seattle’s 911 system went down again, this time because a different router failed. The simultaneous failure of so many software systems smelled at first of a coordinated cyberattack. Almost more frightening was the realization, late in the day, that it was just a coincidence.

And none of those systems involved AI in any way. AI is just the newest form of hypercomplex and inscrutable software to arrive on the scene. Any system of sufficient complexity has unknowable behaviors; a classic example is Conway’s Game of Life, a game based on a small number of simple rules that lead to unpredictable outcomes.

Nonetheless, the point about understanding AI stands: AIs are going to be increasingly less predictable as they grow in power, speed, and range of application, and we are unlikely to grow better at understanding them. And, since they act something like people, we also have to contend with AIs inheriting our cognitive biases, and work out how to sidestep that can of worms.

My bet is we will accept a middle option. Rather than having to make all AIs transparent (which may be impossible, anyway), we’ll simply require them to demonstrate their behavior in a test or simulation setting for some number of hours. Like a driver’s test, except maybe a few months long.

Ultimately we may need to create a class of AIs to monitor other AIs, looking for and stepping in when behaviors stray outside of some acceptable range, kind of like what police do in democratic, open societies. It’s the crazy guy taking his clothes off and swearing at the top of his voice at the ball game that gets attention, and not the great majority of folks watching the game, even if they are shouting.
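To make the Game of Life point concrete, here is a minimal sketch in Python of the standard rules: a dead cell with exactly three live neighbours comes alive, and a live cell with two or three live neighbours survives. The function names (`step`, `run`) and the set-of-coordinates representation are choices made for this illustration, not anything from the sources quoted above.

```python
from collections import Counter


def step(live):
    """Advance one generation. `live` is a set of (x, y) cells that are alive."""
    # Count, for every cell next to at least one live cell, how many live neighbours it has.
    counts = Counter(
        (x + dx, y + dy)
        for (x, y) in live
        for dx in (-1, 0, 1)
        for dy in (-1, 0, 1)
        if (dx, dy) != (0, 0)
    )
    # Birth on exactly 3 neighbours; survival on 2 or 3.
    return {cell for cell, n in counts.items() if n == 3 or (n == 2 and cell in live)}


def run(live, generations):
    """Apply `step` the given number of times and return the final set of live cells."""
    for _ in range(generations):
        live = step(live)
    return live


# A glider: five cells that reproduce their own shape one cell diagonally every 4 generations.
glider = {(1, 0), (2, 1), (0, 2), (1, 2), (2, 2)}

# The R-pentomino: also five cells, but its long-run behaviour is far harder to guess.
r_pentomino = {(1, 0), (2, 0), (0, 1), (1, 1), (1, 2)}

for generation in (0, 10, 20, 50):
    print(generation, len(run(r_pentomino, generation)))
```

Both starting patterns have five cells and obey the same two rules, yet one travels across the grid in an orderly way while the other sprawls into a changing population that is hard to anticipate without simply running it. That is the sense in which simple rules can produce outcomes that are hard to predict.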

What to Watch For

- We can expect a quickly shifting regulatory environment, with increased acceptance of some aspects of AI, like Trump’s new car testing rules and Cuomo’s NYC announcements, that seem to open the floodgates to live on-the-ground acceptance of driverless vehicles.

- On the other hand, growing concern about algorithms and AI in media, government, and business may lead to heightened scrutiny. Examples include the ‘fake news’ epidemic in social media and the possibility of hardwired bias in algorithms used to evaluate prisoners for parole or to determine who to hire and promote in the corporation.

- Will the use of AI in cybersecurity — in both attack and defense — change perceptions about the absolutely essential role of AI in today’s world, perhaps just as much as driverless cars will?

- AI in the financial sector demonstrates that absolute provability is less important than simply outpacing human capabilities in the real world. No one is demanding proof of AlphaGo’s logic when it beats the best human Go players, or of its successor AlphaZero’s when it beats the strongest chess engines: it is self-evidently better.

Results of Attitudes Toward AI Survey

We set up a survey in late 2017 to find out readers’ attitudes about AI. Here are the results and some observations.

question 1

I was surprised that 60% believe AI is ‘just the logical next step’ in what computers can do. I fall into the evolutionary leap group. As just one example, we’ve had cruise control on cars since the ’50s, but the appearance of autonomous cars doesn’t seem like a linear trend, but a disruptive jump from mechanical to cognitive technologies.

question 2

Almost 15% believe investments in AI will crash or have peaked. I guess there are still some naysayers left, although I would have thought the story about AlphaZero teaching itself chess and, after less than four hours of training, beating the previously strongest chess-playing AI would have convinced anyone.

question 3

There is actually an argument that we should have included an option of ‘already has’, considering the number of robots being deployed in manufacturing. I fall into the 2 to 3 years camp for ‘major impact on the economy’, although some industries or niches are being hit already, like the impact on hiring of first-year associates by law firms due to AI (see this MIT Technology Review piece, Lawyer-Bots Are Shaking Up Jobs). When AI can do in seconds what formerly took newly-minted lawyers 360,000 hours, look out. And that’s this year.

question 4

Interesting that our survey panel, in general, believes that their own industry is likely to be hit by the impacts of AI more quickly than the economy as a whole! In hindsight, I think we should have asked what industries our panel members work in, so we could have cross-correlated. But it might not have mattered: this sense that we, and our own industries, are personally threatened by AI in the near term may be a widespread and shared fear, with everyone believing that the threat is smaller in other industries. The ‘grass is always greener’, in reverse?

question 5

On reflection, we might have asked ‘which industry do you think will be most impacted by AI over the next 3–5 years?’ because ‘benefit’ is a slippery concept. I think the rise of driverless vehicles should put transportation higher, but we haven’t really experienced much of that yet. I bet this chart will look very different if we ask the same question in two years.

question 6

Once you start to imagine that cybersecurity, programming, and dev ops may turn out to be areas that AI could excel in, I think you logically begin to conclude that a greater proportion of IT cash would flow toward AI. My bet is that 20% and below will seem very small in a few years.

Final Takeaway

The one-line takeaway from this survey: our panel clearly agrees that AI will have a major impact on the economy in the near term, that investment in the sector will continue, and that AI will disrupt their own industry, which they anticipate (fear?). Regarding IT investments, AI will be claiming a larger share of the pie in the future, if not the whole thing.

How can Traction Technology help?

Traction Technology is a ground-breaking platform engineered expressly to eliminate internal innovation silos, thereby enabling enterprises to seamlessly collaborate and align their business needs with promising technologies. By providing dynamic features that promote collaboration and innovation, it aims to accelerate digital transformation in the enterprise.

Here's how Traction Technology can help:

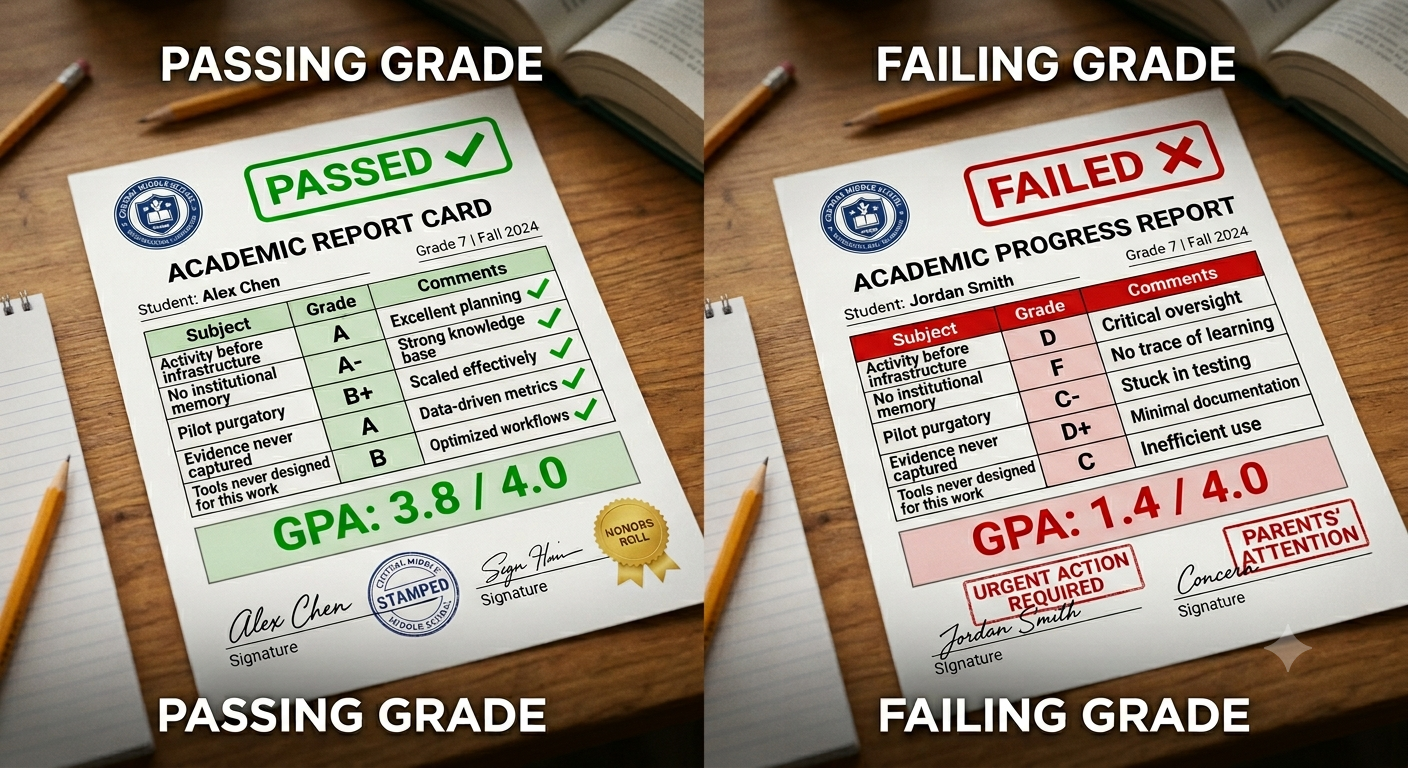


Discovery of Relevant Startups: Traction Technology helps established companies discover relevant advanced technologies aligned with their strategic goals and innovation areas. It curates startups based on different industries, technology trends, and areas of business interest, making it easier to find potential partners or investment opportunities and share this information across the enterprise.

Collaboration and Engagement Tools: Traction Technology offers tools that help manage the engagement process with startups. It provides a structured approach to evaluating, tracking, and managing interactions with multiple startups across multiple projects and pilots, improving efficiency and collaboration.

Data-Driven Insights: The platform provides data-driven insights to help make informed decisions. This includes information on startup funding, growth indicators, customers and competitors, which can help in assessing potential startup partnerships.

Innovation Pipeline Management: Traction Technology aids in managing the innovation pipeline. It helps companies capture ideas and request and track innovation projects, monitor progress, and measure results in real time, promoting a culture of continuous innovation.

Track KPIs and Generate Custom Reports: Effortlessly track Key Performance Indicators (KPIs) with real-time dashboards and generate custom reports tailored to your organization's unique requirements. Stay ahead of the curve by monitoring project progress and engagement.

By leveraging a platform like Traction Technology, established companies can gain a competitive edge, driving their digital transformation journey and adapting to the fast-paced business environment. It supports the integration of startup agility, innovation, and customer-centric approach into their operations, which is critical for success in the digital age.

About Traction Technology

We built Traction Technology to meet the needs of the most demanding customers, empowering individuals and teams to accelerate and help automate the discovery and evaluation of emerging technologies. Traction Technology speeds up the time to innovation at large enterprises, saving valuable time and money by accelerating revenue-producing digital transformation projects and reducing the strain on internal resources, while significantly mitigating the risk inherent in working with early-stage technologies.

Let us share some case studies and see if there is a fit based on your needs.

Traction Report Update: 23 ways AI could transform your business in 2023.

For more information

● Explore our software and research services.

● Download our brochure: How to Evaluate Enterprise Startups.

● Watch a demo of our innovation management platform and start your free trial.
